After you take a picture, the film paper holds an invisible picture called the latent image. To make it visible, you place the film in the developer bath. The developer targets the parts of the film that were hit by light and turns them dark: it converts the light-struck silver halide crystals into black metallic silver, which forms the image. Controlling how long the film stays in the bath is very important, because this determines how dark and detailed the final picture will be. This project aims to provide a hands-on experience of the film developing process, without the need to enter a darkroom or handle chemical solutions.

To create a Touch-Visual-Audio interface that ignites people’s curiosity and interest in the craft of film photography and printing.

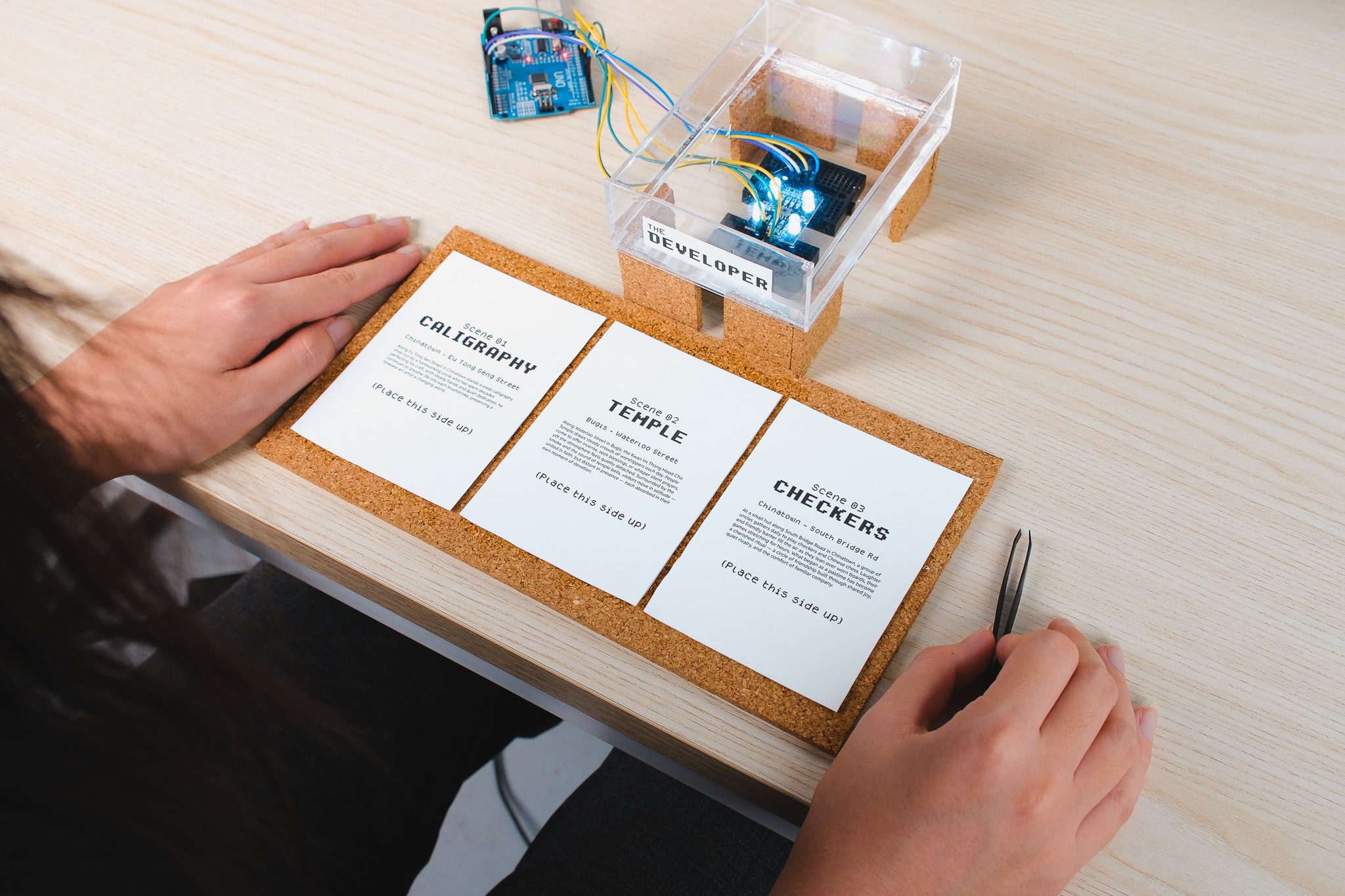

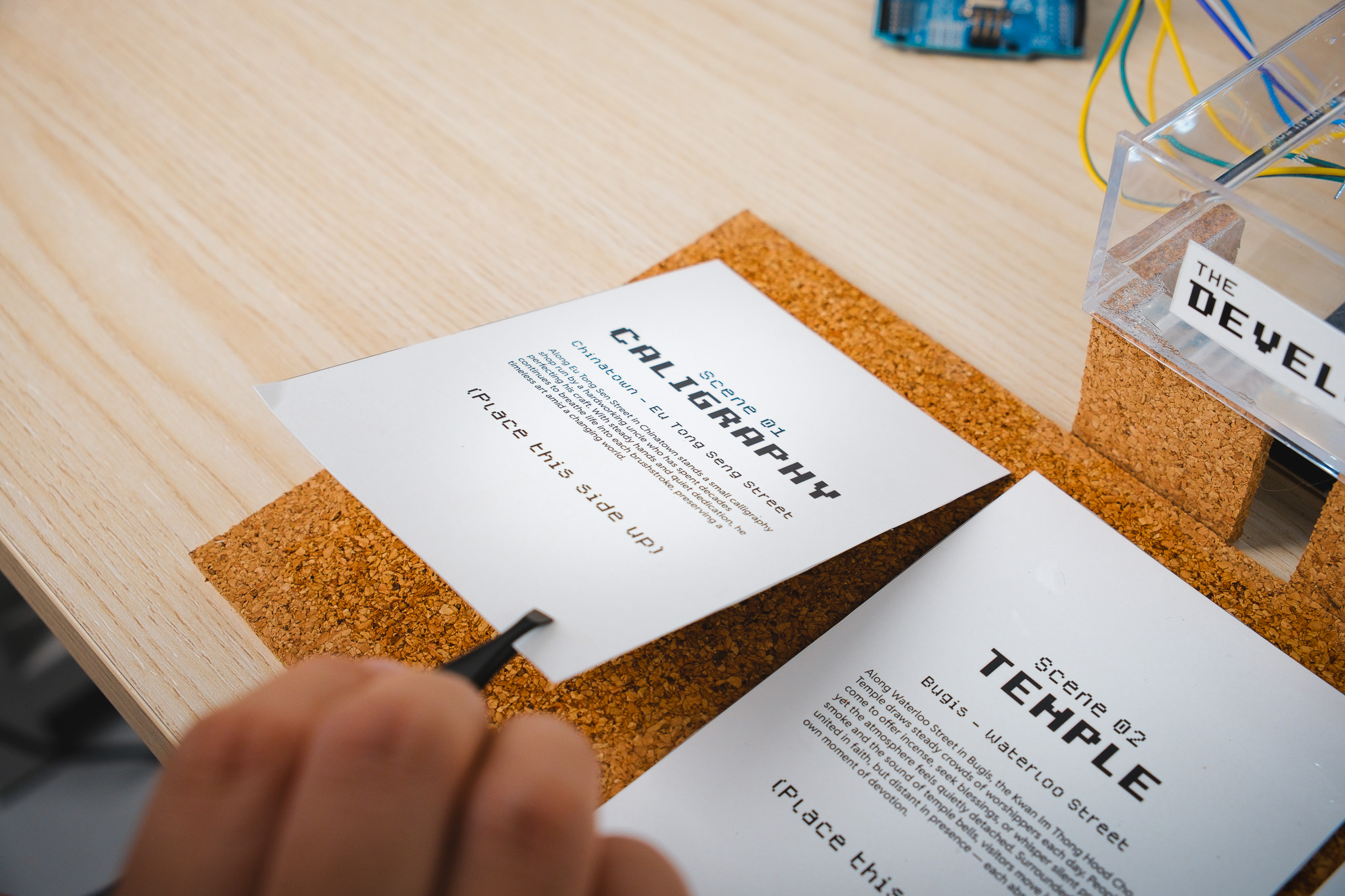

Users choose one of three film papers, each labelled with a text description of the image it contains, and place it in the developer tray. A color sensor reads the film and triggers TouchDesigner visuals and audio as the image and its soundscape gradually develop.

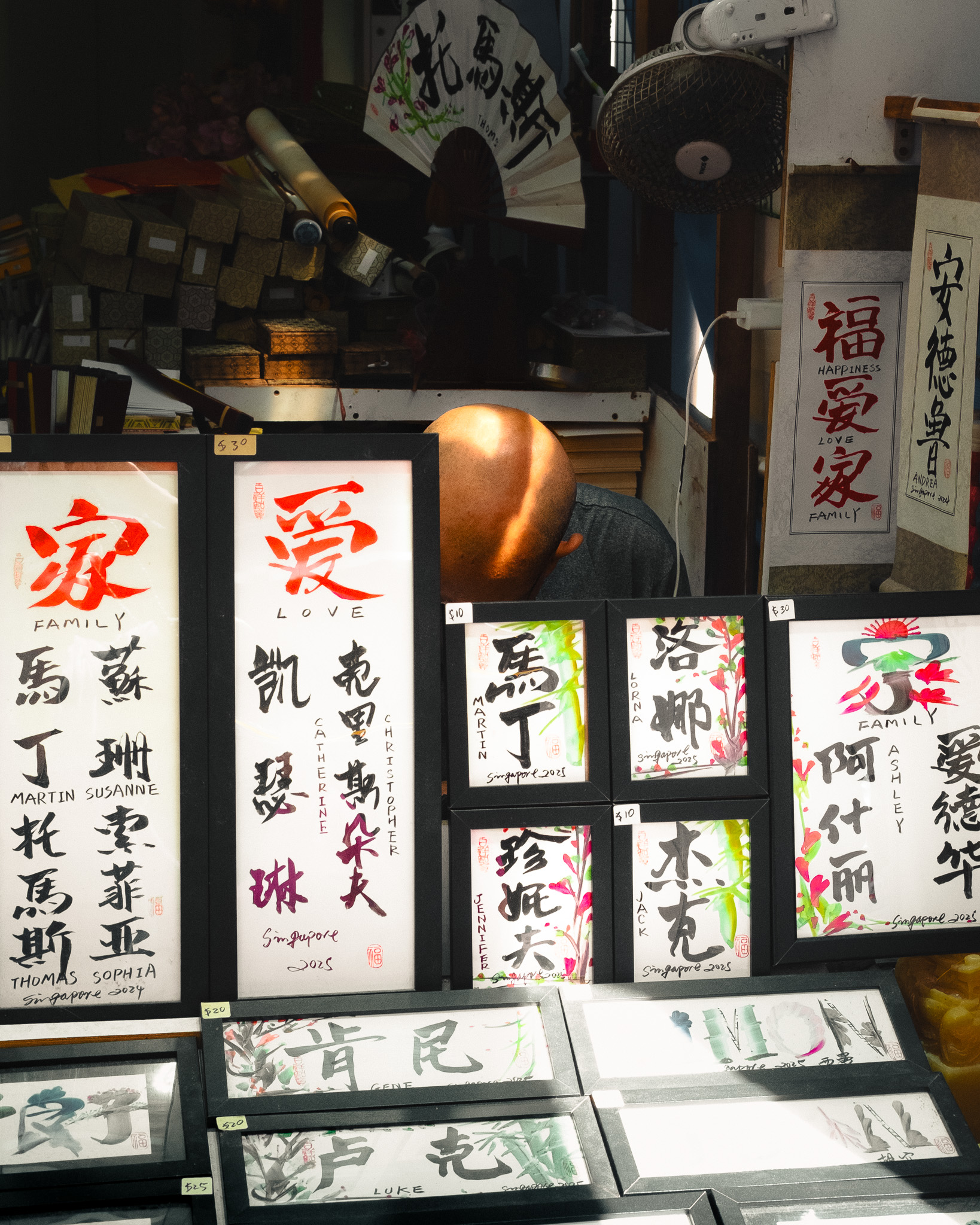

During one of my photowalks, I ventured along the streets of Chinatown and Bugis to visit areas that pack plenty of heritage and history (e.g. temples and calligraphy shops). I snapped pictures with the goal of capturing people interacting with these places of heritage.

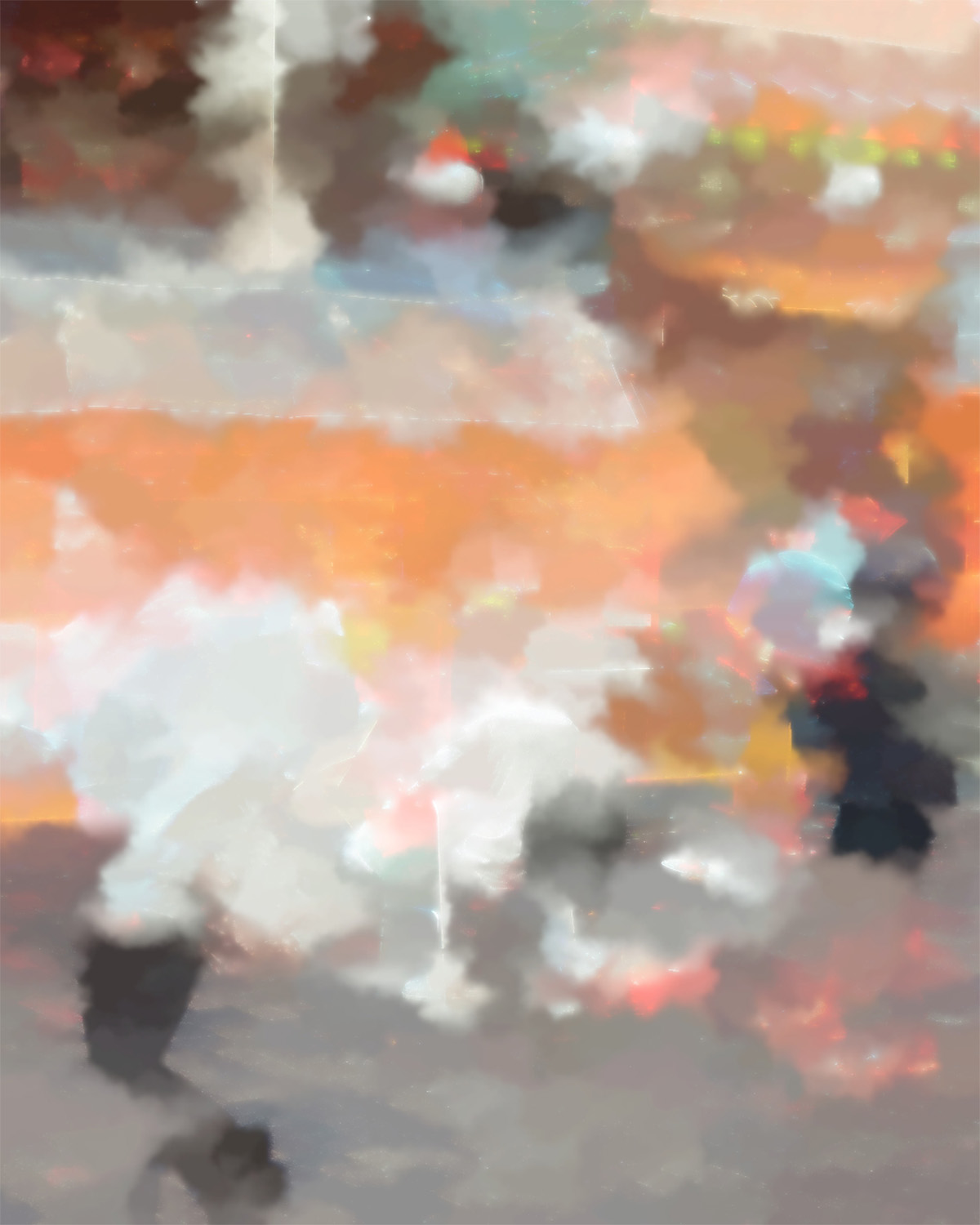

I created a watercolor visual in TouchDesigner, using the image I took as the source input. Since the film paper sits in the developer liquid, it made sense for the visual to take this watery form. Later on, the color data read by the Arduino is used to adjust parameters within this visual, so that the watery pixels appear to come together and form the image, simulating film development.
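The idea of watery pixels gradually settling into the image can be sketched as a single "development progress" value that drives several visual parameters at once. This is only a minimal illustration, not the actual TouchDesigner network: the parameter names (`displacement`, `blur`, `opacity`), their ranges, and the 20-second duration are all assumptions made for the example.

```python
def smoothstep(t: float) -> float:
    """Ease-in-out curve on [0, 1]; input outside that range is clamped."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def lerp(start: float, end: float, t: float) -> float:
    """Linear interpolation from start (t = 0) to end (t = 1)."""
    return start + (end - start) * t


def development_params(elapsed_s: float, duration_s: float = 20.0) -> dict:
    """Map time since the paper was detected to hypothetical visual parameters.

    duration_s is an assumed development time, not a value from the project.
    """
    progress = smoothstep(elapsed_s / duration_s)
    return {
        "progress": progress,
        # Pixels start fully scattered and settle into place.
        "displacement": lerp(1.0, 0.0, progress),
        # Image starts heavily blurred and sharpens.
        "blur": lerp(30.0, 0.0, progress),
        # Image fades in as it "develops".
        "opacity": lerp(0.0, 1.0, progress),
    }


if __name__ == "__main__":
    for seconds in (0, 5, 10, 15, 20):
        print(seconds, development_params(seconds))
```

The easing curve makes the image emerge slowly at first and settle gently at the end, which loosely mirrors how a print seems to appear in the tray. A linear ramp would work just as well if a steadier reveal is preferred.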

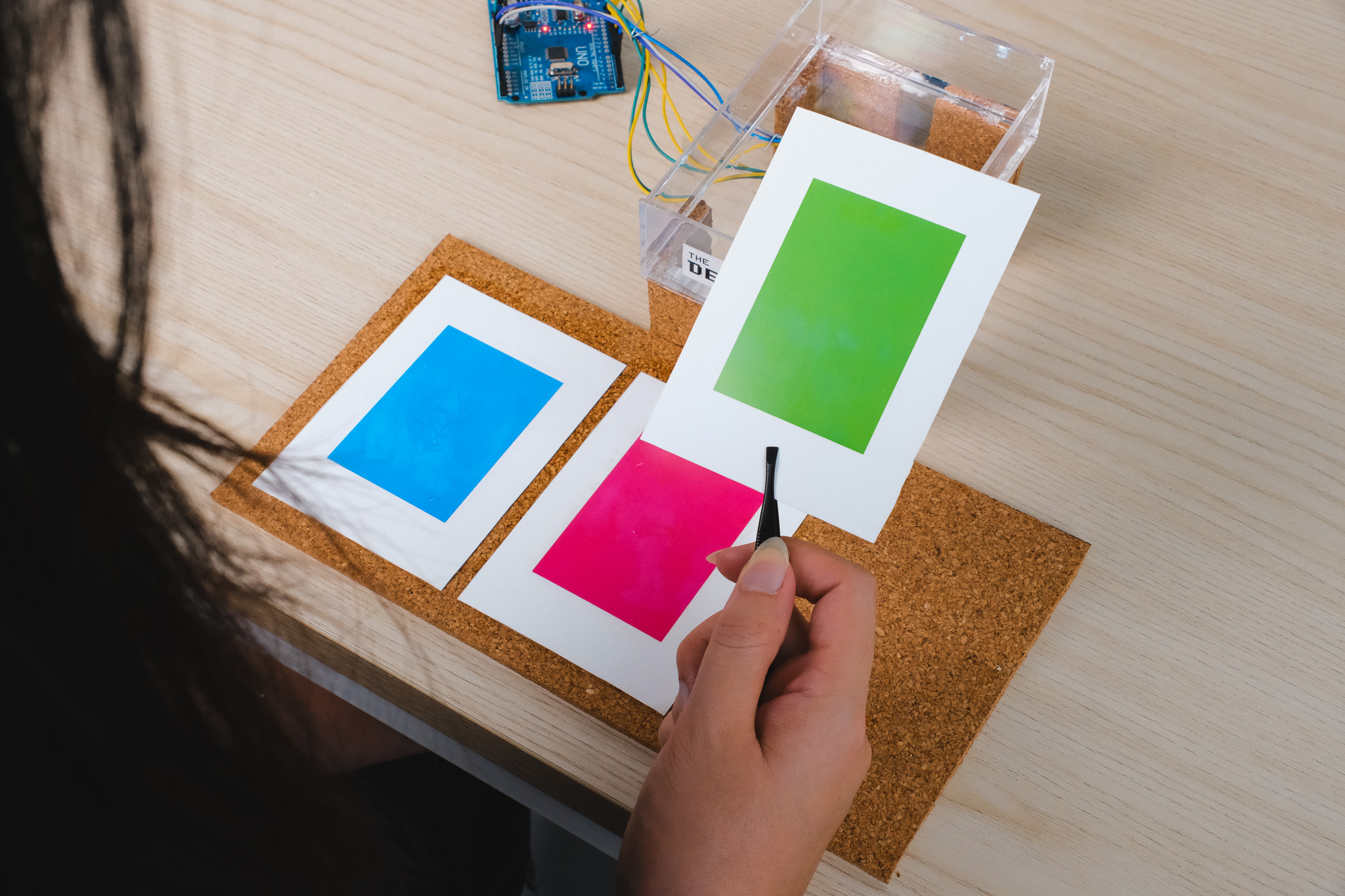

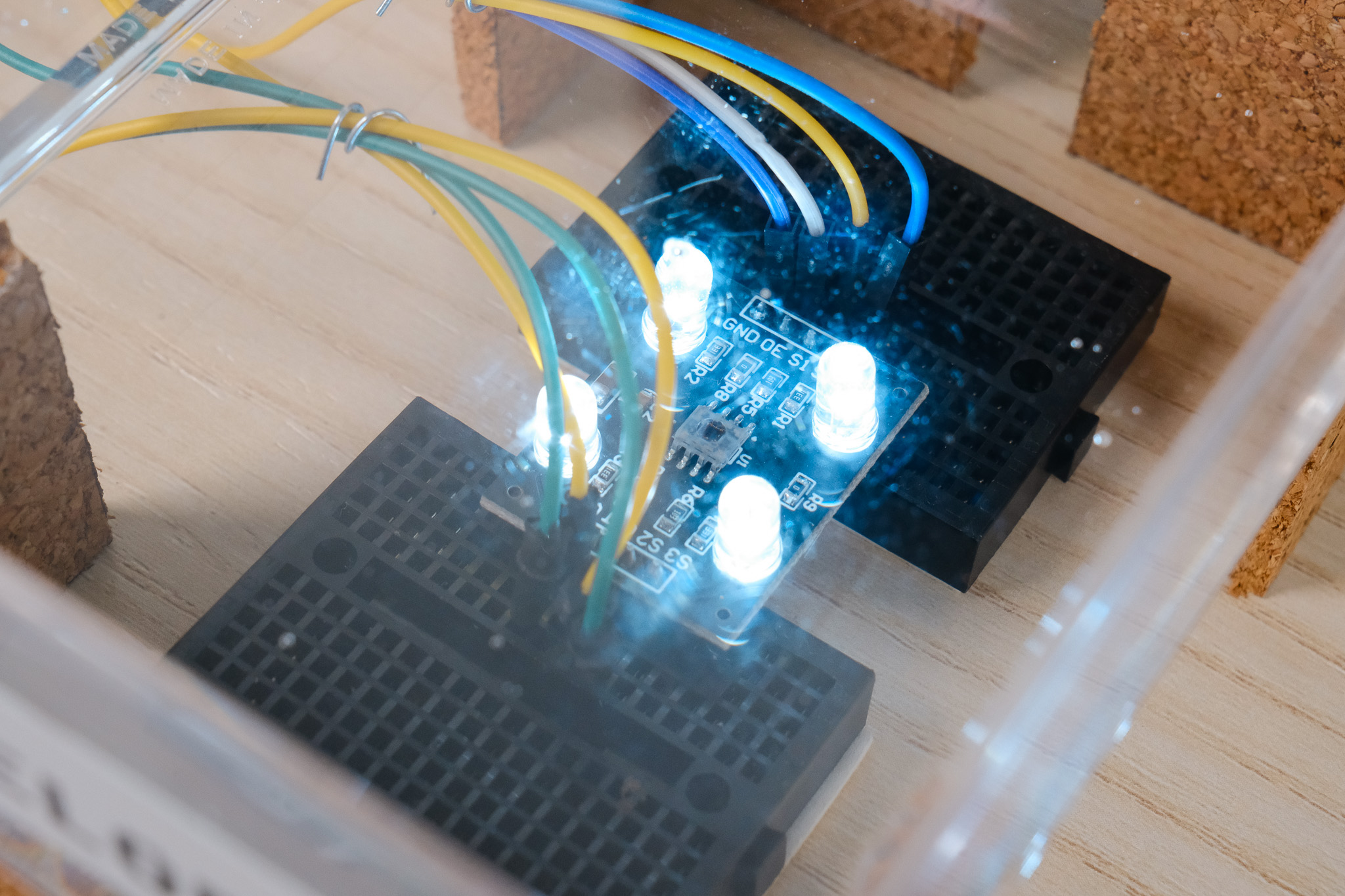

The back of each of the three film papers carries a different color: red, green, or blue. I placed a color sensor below a transparent developer tray to detect these colors once the film paper is placed above it, text side up. Once a color is detected, the Arduino sends the data to TouchDesigner, where it is normalised and mapped to trigger visual changes on screen. The same color data also switches on the soundscape.
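The normalise-and-map step might look something like the sketch below. It rests on several assumptions that are not from the project itself: that the Arduino prints one reading per line as comma-separated `R,G,B` integers, that raw values range from 0 to 255, and that a paper counts as detected only when one channel clearly dominates. The function names and threshold values are hypothetical.

```python
from typing import Optional, Tuple


def parse_rgb(line: str) -> Optional[Tuple[int, int, int]]:
    """Parse an assumed 'R,G,B' serial line; return None if malformed."""
    parts = line.strip().split(",")
    if len(parts) != 3:
        return None
    try:
        r, g, b = (int(p) for p in parts)
    except ValueError:
        return None
    return r, g, b


def normalise(rgb: Tuple[int, int, int], max_value: int = 255) -> Tuple[float, ...]:
    """Scale raw channel values to the 0..1 range, clamping outliers."""
    return tuple(max(0.0, min(1.0, c / max_value)) for c in rgb)


def classify(rgb_norm: Tuple[float, ...],
             min_level: float = 0.3,
             min_margin: float = 0.1) -> Optional[str]:
    """Return 'red', 'green' or 'blue' if one channel clearly dominates.

    Returns None for an empty tray or an ambiguous reading.
    The thresholds are illustrative guesses, not calibrated values.
    """
    names = ("red", "green", "blue")
    ranked = sorted(zip(rgb_norm, names), reverse=True)
    (top, name), (second, _) = ranked[0], ranked[1]
    if top < min_level or top - second < min_margin:
        return None
    return name


if __name__ == "__main__":
    for raw in ("200,40,35", "10,220,30", "20,20,20", "noise"):
        reading = parse_rgb(raw)
        paper = classify(normalise(reading)) if reading else None
        print(repr(raw), "->", paper)
```

Returning `None` for weak or ambiguous readings keeps the visuals and soundscape from triggering while the tray is empty or the paper is still being moved into place. In practice the thresholds would need tuning against the real sensor and the lighting around the tray.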